-

Tool Calling Reliability for LLM Agents: Schemas, Validation, Retries (Production Checklist)

Tool calling is where most “agent demos” die in production. Models are great at writing plausible text, but tools require correct structure, correct arguments, and correct sequencing under timeouts, partial…

-

Agent Evaluation Framework: How to Test LLM Agents (Offline Evals + Production Monitoring)

If you ship LLM agents in production, you’ll eventually hit the same painful truth: agents don’t fail once; they fail in new, surprising ways every time you change a prompt, tool,…

-

Kimi K2.5: What It Is, Why It’s Trending, and How to Use It (Vision + Agents)

Kimi K2.5 is trending because it’s not just “another LLM.” It’s being positioned as a native multimodal model (text + images, and in some setups video) with agentic capabilities—including a…

-

Enterprise Agent Governance: How to Build Reliable LLM Agents in Production

Enterprise Agent Governance is the difference between an impressive demo and an agent you can safely run in production. If you’ve ever demoed an LLM agent that looked magical—and then…

-

Why Agent Memory Is the Next Big AI Trend (And Why Long Context Isn’t Enough)

Agent memory is emerging as the missing layer for reliable AI agents. Learn why long context windows are not enough and how memory capture, compression, retrieval, and consolidation work.

-

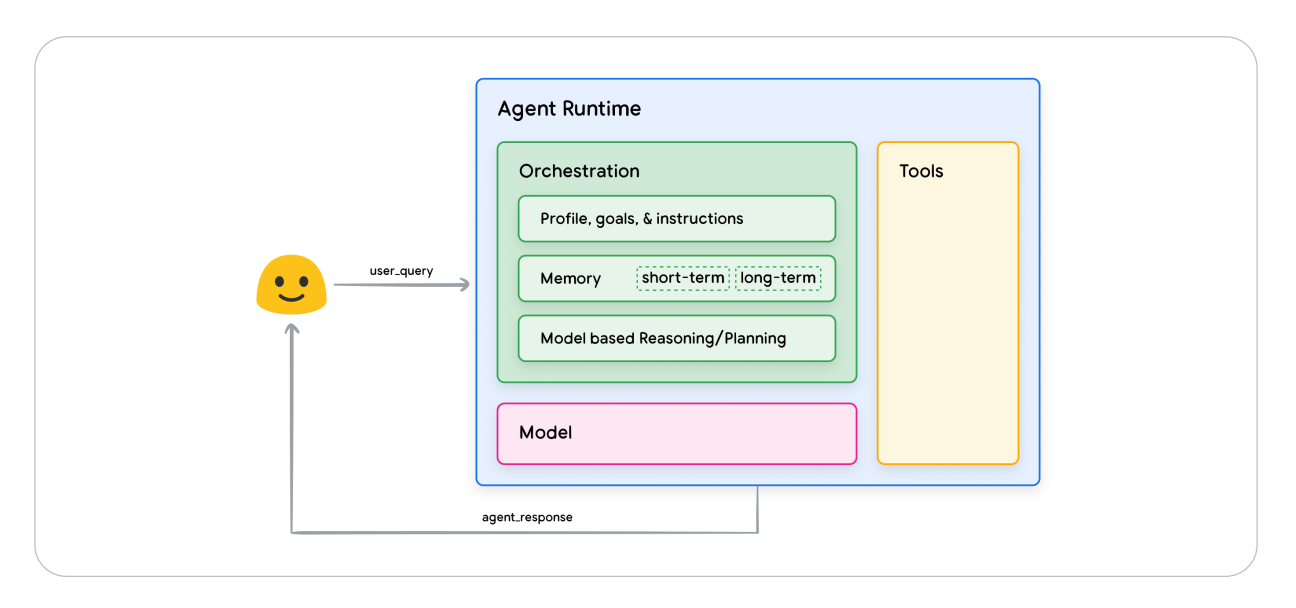

AI Agents by Google: Revolutionizing AI with Reasoning and Tools

TL;DR: AI Agents by Google is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability…