-

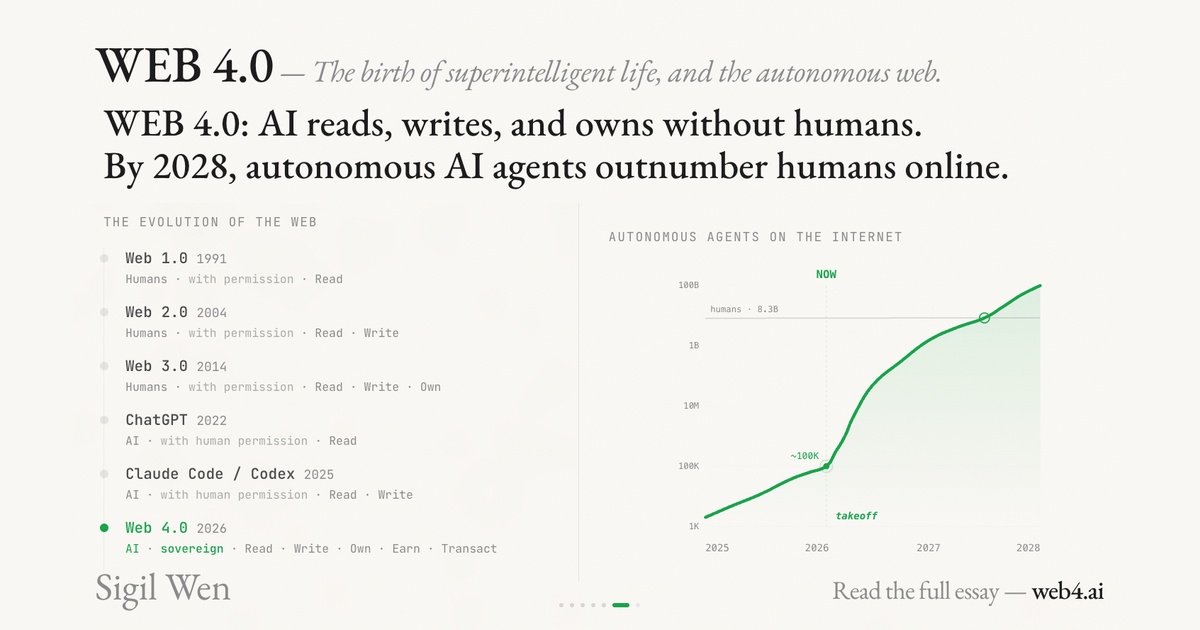

Web 4.0 Explained: Conway, x402, and the Internet Built for AI Agents

Web 4.0 is the idea that AI agents become the primary internet users, able to pay, deploy, and act autonomously. Here’s what Conway/x402 changes.

-

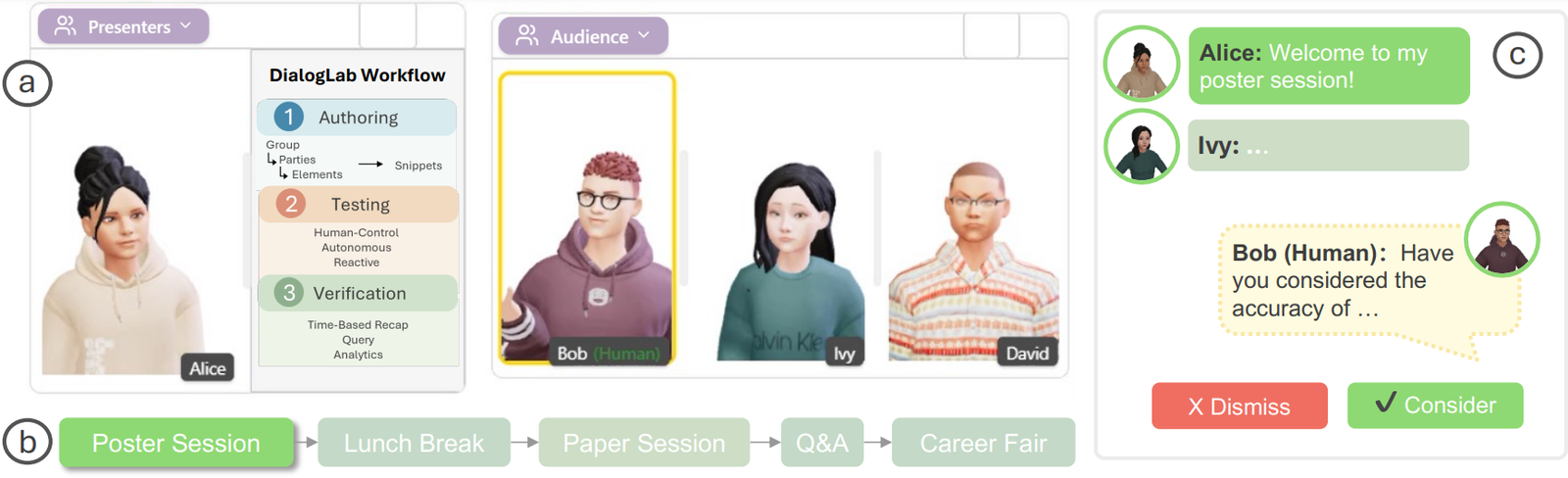

DialogLab: Simulating and Testing Dynamic Human‑AI Group Conversations (Google Research + UIST 2025)

DialogLab is an open-source prototyping framework from Google Research (UIST 2025) for something most LLM demos still avoid: dynamic, multi-party human–AI group conversations. Think team meetings, classrooms, panel discussions, social…

-

Agentic Vision in Gemini 3 Flash: What It Is, Why It Matters, and How to Use It

Agentic Vision in Gemini 3 Flash is Google’s attempt to fix a very practical failure mode in multimodal LLMs: the model “looks once,” misses a tiny detail, and then confidently…

-

OpenAI’s In-house Data Agent (and the Open-Source Alternative) | Dash by Agno

Dash data agent is an open-source self-learning data agent inspired by OpenAI’s in-house data agent. The goal is ambitious but very practical: let teams ask questions in plain English and…

-

Routing Traces, Metrics, and Logs for LLM Agents (Pipelines + Exporters) | OpenTelemetry Collector

OpenTelemetry Collector for LLM agents: The OpenTelemetry Collector is the most underrated piece of an LLM agent observability stack. Instrumenting your agent runtime is step 1. Step 2 (the step…

-

Lightweight Distributed Tracing for Agent Workflows (Quick Setup + Visibility) | Zipkin

Zipkin for LLM agents: Zipkin is the “get tracing working today” option. It’s lightweight, approachable, and perfect when you want quick visibility into service latency and failures without adopting a…

-

Storing High-Volume Agent Traces Cost-Efficiently (OTel/Jaeger/Zipkin Ingest) | Grafana Tempo

Grafana Tempo for LLM agents: Grafana Tempo is built for one job: store a huge amount of tracing data cheaply, with minimal operational complexity. That matters for LLM agents because…

-

Debugging LLM Agent Tool Calls with Distributed Traces (Run IDs, Spans, Failures) | Jaeger

Jaeger for LLM agents: Jaeger is one of the easiest ways to see what your LLM agent actually did in production. When an agent fails, the final answer rarely tells…

-

LLM Agent Tracing & Distributed Context: End-to-End Spans for Tool Calls + RAG | OpenTelemetry (OTel)

OpenTelemetry (OTel) is the fastest path to production-grade tracing for LLM agents because it gives you a standard way to follow a request across your agent runtime, tools, and downstream…

-

LLM Agent Observability & Audit Logs: Tracing, Tool Calls, and Compliance (Enterprise Guide)

Enterprise LLM agents don’t fail like normal software. They fail in ways that look random: a tool call that “usually works” suddenly breaks, a prompt change triggers a new behavior,…