
What is Infinite Retrieval, and How Does It Work?

TL;DR Infinite Retrieval is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability with evals.…


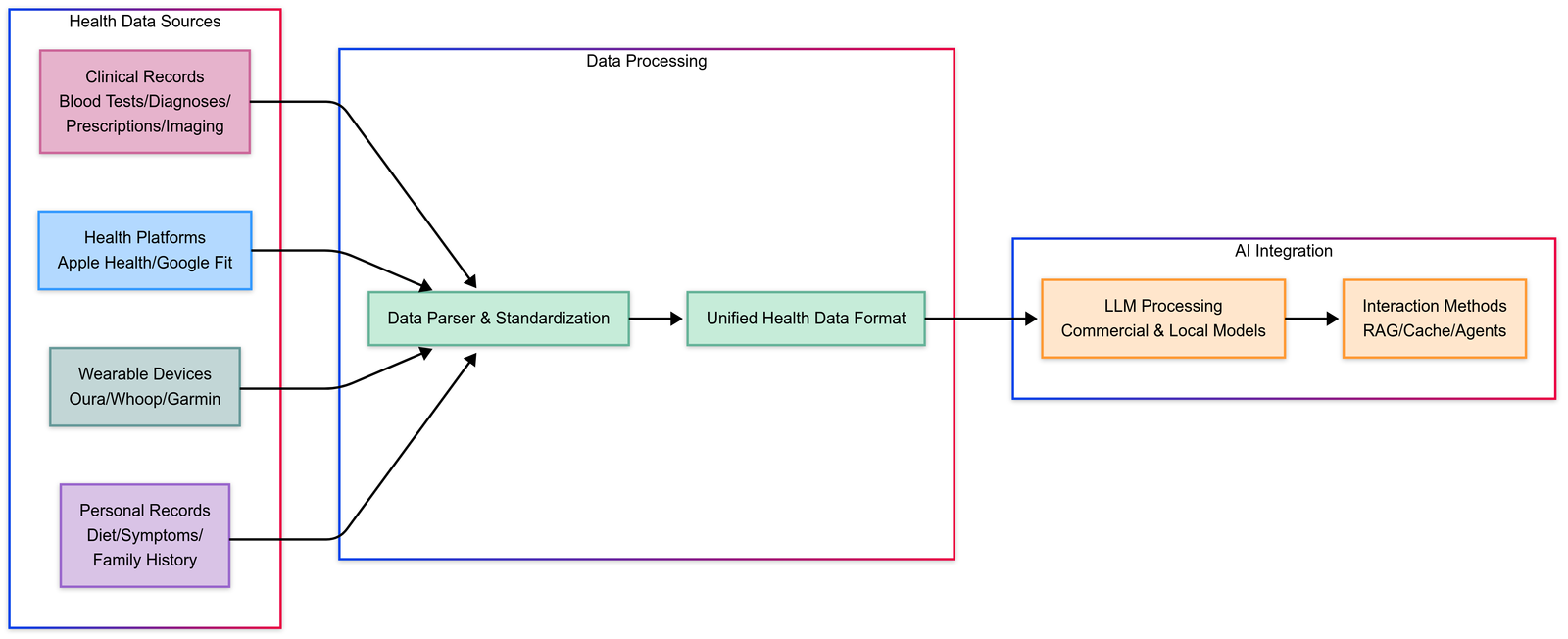

Build Your Own Free AI Health Assistant for Personalized Healthcare

TL;DR Building your own free AI health assistant is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability…


Enterprise Agentic RAG Template by Dell AI Factory with NVIDIA

TL;DR Enterprise Agentic RAG is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability with…


NVIDIA NV Ingest for Complex Unstructured PDFs and Enterprise Documents

TL;DR NVIDIA NV Ingest is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability with…


Cache-Augmented Generation (CAG): A Superior Alternative to RAG

TL;DR Cache-Augmented Generation is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability with evals.…


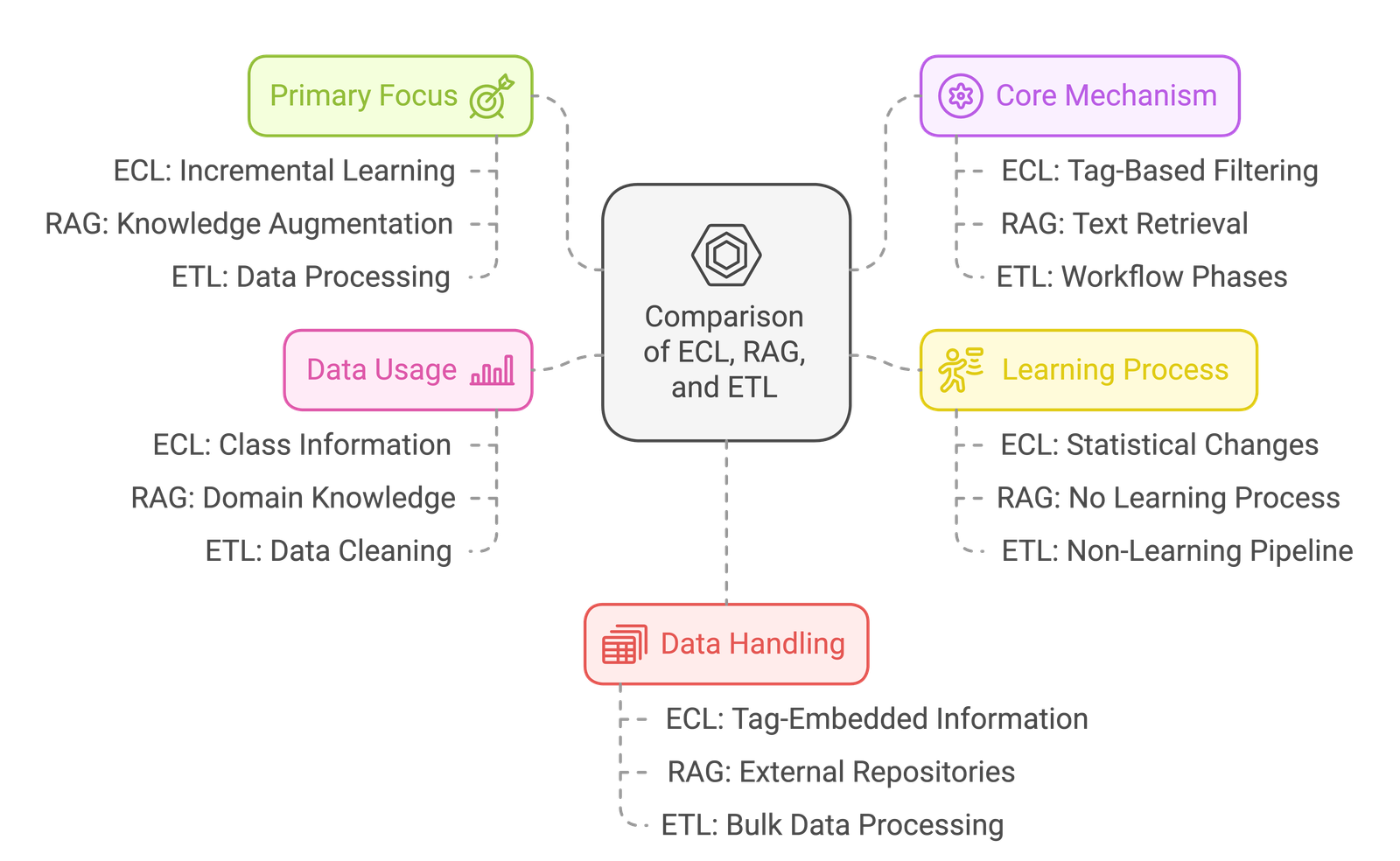

ECL vs RAG and What Is ETL: AI Learning, Data, and Transformation

TL;DR ECL vs RAG is mostly about making agent behavior predictable and auditable. Make tools safe: schemas, validation, retries/timeouts, and idempotency. Ground answers with retrieval (RAG) and measure reliability with…